The Inference Engine

Until recent years, computers were generally thought of as command-driven machines. Men and women built them and wrote software that supplied a detailed set of instructions or rules: if a certain thing happened, then a certain action would be triggered. Software applications have grown increasingly sophisticated, but they are still sets of commands. As a result, computers have been limited by human speed and imagination, as machines could only perform the instructions they had been given. Computers have also been eminently predictable: you could look at the output and readily trace the process that produced it. Even though computers have processed these instructions with increasing speed as hardware has advanced, and the instructions have increased in complexity as software and algorithms have advanced, human instruction has remained the limiting factor. This governing logic has ruled computing since Ada Lovelace and the beginning of computing nearly two centuries ago.

Machine learning and artificial intelligence (AI) change this fundamental paradigm of how computers execute instructions and how they interact with humans. Machine learning and AI are two related terms that are sometimes used synonymously. We view them as two different points on a spectrum of technological development, and for this discussion we will focus on machine learning, as it will be the root of most changes that we see in the next few years. The fundamental change at the core of machine learning is the transition from a human-command-driven engine to a machine-inference engine. An inference engine is a piece of software that applies logical rules to a data set to infer new information. This shift is extremely significant, as it results in software that is increasingly capable of developing and refining its own instructions for performing tasks, without a human being as the limiting factor in providing those instructions.
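To make the definition above concrete, here is a minimal sketch of an inference engine in its classical form: it repeatedly applies if-then rules to a set of known facts until nothing new can be derived (a technique usually called forward chaining). The rule format, the function name `infer`, and the facts themselves are invented for illustration. In this sketch the rules are still written by hand; a machine learning system would instead estimate comparable rules from data.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule pairs a set of premises with a single conclusion (hypothetical format).
Rule = Tuple[FrozenSet[str], str]


def infer(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply the rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # Fire a rule only when all of its premises are known
            # and its conclusion would add something new.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Illustrative rules and facts, invented for this example.
RULES: List[Rule] = [
    (frozenset({"rain_forecast"}), "roads_wet"),
    (frozenset({"roads_wet", "rush_hour"}), "heavy_traffic"),
    (frozenset({"heavy_traffic"}), "reroute_delivery"),
]

derived = infer({"rain_forecast", "rush_hour"}, RULES)
print(sorted(derived))
```

Starting from two facts, the engine derives three more, including `reroute_delivery`, which no single rule connects directly to the inputs.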

As machine learning systems become increasingly capable, we are moving away from predictable, strict processes and outcomes. A computer system can now weigh probabilities and projections based on the information it gathers every time it makes a decision. This will enable new types of problem solving and the ability to process more information in new and complex ways, enabling entirely new types of machine capabilities. The shift can also create new challenges, as the constant gathering of millions of pieces of data can result in different answers to the same problem, and software engineers may no longer be able to retroactively map the algorithm followed to understand how a system arrived at a certain point.
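The point about different answers to the same problem can be shown with a deliberately tiny sketch. The class `RunningThreshold` and the travel-time figures are hypothetical: the "model" is just a running average, far simpler than a real machine learning system, but it shows how the same question can get a different answer once more data has been gathered.

```python
class RunningThreshold:
    """Flags a travel time as slow when it exceeds the mean seen so far."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0

    def observe(self, value: float) -> None:
        # Incremental mean update, so no history needs to be stored.
        self.count += 1
        self.mean += (value - self.mean) / self.count

    def is_slow(self, value: float) -> bool:
        return value > self.mean


model = RunningThreshold()

# Early observations: trips of roughly 20 minutes (invented data).
for minutes in [20.0, 22.0, 24.0]:
    model.observe(minutes)
before = model.is_slow(30.0)  # mean is 22, so a 30-minute trip counts as slow

# Later observations: traffic has worsened (invented data).
for minutes in [40.0, 45.0, 50.0]:
    model.observe(minutes)
after = model.is_slow(30.0)  # mean is now about 33.5, so the same trip is not slow

print(before, after)
```

Because the sketch stores only the running mean and not the observations behind it, the data that led to either answer cannot be reconstructed afterward, a small-scale version of the traceability problem described above.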

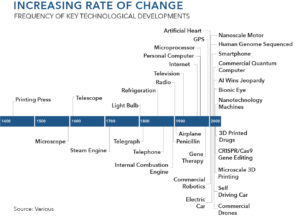

The latest iteration of software development has focused on mobile, cloud, and location-based technologies; the next will be built on machine learning. It will be applied to the majority of our existing software systems, from maps and digital assistants to accounting software. When computers are able to infer new instructions from both data inputs and prior outcomes, software can make more refined decisions over time, significantly increasing the value of large sets of data.

These developments are already in production in many cases, and software engineers are already developing advanced algorithms with machine-learning capabilities that will grow increasingly complex and capable over time. Those capabilities have massive application potential. They can be integrated into every software platform in use today and will soon affect every software interaction in our daily lives: from healthcare to finance, from education to social media, from QuickBooks to Siri to weather forecasting. The magnitude of this transformation is akin to the initial wave of software applications in the 1980s and 1990s, when software first enabled large-scale interaction between people and computers. The emerging transformation driven by machine learning will change the paradigm of human interaction with software.

Much of the media hysteria in this field is focused on the risks of advanced “general” artificial intelligence systems. While many of these stories address very real issues, they skip over the more near-term and tangible effects that we will see when even simple machine learning algorithms cascade through all of our software applications.

Economic Implications of Machine Learning

Machine learning will affect every industry and government organization that uses software. This, of course, includes everyone. In some instances, these effects will be incremental; in others they have the potential to be transformational.

One area where machine learning will be especially impactful is transportation, as it will allow the industry to optimize resources in a way it has not yet been able to achieve. For instance, picture the current transportation environment as a chaotic system of 40-passenger buses that follow fixed routes; ad-hoc carpool networks that might carry a maximum of 7 passengers (15 for someone who owns a passenger van) but are usually only partially filled; and individual drivers with 3 or 4 seats completely open in their cars. Machine learning would allow a computer system to better anticipate demand, resulting in more efficient routes and fewer empty seats on the road. This makes more effective use of existing infrastructure. Machine learning has also made self-driving cars possible, and they will see widespread use in the coming years. Autonomous vehicles, enabled by machine learning algorithms, will learn from each other’s experience on a daily basis to build more efficient routes based on traffic and weather.

Similarly, new logistics systems that spring up to support delivery in large urban areas, such as package and grocery delivery, will be enabled by machine learning, resulting in efficient and optimized delivery routes. We will begin to see an overall optimization of integrated traffic management and transportation systems, stemming from the ability of machine learning systems to process massive data sets in real time, which is far beyond the capabilities of city planners and distribution managers. Machine learning systems can analyze all of the process requirements and environmental characteristics within a delivery network at once, leading to a faster and far more efficient system for transporting goods.

Machine learning applied to medical science can also bring advances in modern medicine, diagnostic systems, drug research, and nutrition. Doctors will be able to leverage new models of computing to incorporate a more comprehensive, data-driven view of a patient’s health into their diagnosis. Environmental factors, historical factors, patient history, family medical history, and more can be included in these algorithms to make a much more detailed projection than a single doctor could possibly assemble from an oral history. On the physical side, robotics is already used in eye surgery and will continue to advance surgical success rates, opening the door to other surgical procedures.

Even fields that are typically built upon the foundation of human creativity will be impacted by this catalyst. In 2016, Sci-Fi London’s 48-Hour Film Challenge produced a short film titled Sunspring that had been authored by a computer using a type of recurrent neural network (RNN) called long short-term memory (LSTM). Oscar Sharp, a BAFTA-nominated filmmaker, collaborated with Ross Goodwin, an AI researcher at NYU. Sharp and Goodwin named the AI bot they created to write the film Jetson, but it eventually renamed itself Benjamin. They fed Benjamin a “corpus” of sci-fi film and television scripts from the 1980s and 1990s to train him in dialogue and narrative. Benjamin then wrote the entire screenplay for Sunspring, including stage directions, and the script was interpreted and filmed by Sharp and three actors brought on for the project. The result is an intense, strange film set in a dark future. The film also contains a song with lyrics written by Benjamin, using a database of 30,000 folk songs fed to him by Sharp. The film was deemed by critics a “beautiful, bizarre sci-fi comedy” and picked up for digital premiere by Ars Technica. Sunspring was voted into the top ten final films at Sci-Fi London, at which point Benjamin, the AI, assured himself a win by submitting 36,000 votes per hour in the last few hours of voting.

Creativity and Labor

From a basic human perspective, these technologies bring the potential for all of us to unleash brand new ways of being creative, tapping into the human brain in revolutionary ways. The introduction of every tool from the Stone Age forward has undoubtedly been met with skepticism and concern that it would cause humans to grow lazy, weaker, and less intelligent. Hydraulics, it was thought, would keep people from working hard. Calculators would make people forget how to do long division. In reality, when new tools take on the menial tasks, the human brain is free to imagine and create in ways that would otherwise have been lost to long division or other lengthy, tedious tasks. It allows us to think through things on a higher level.

Of course, this raises the question of what types of jobs will be lost, and how the workforce will be affected by the increasing adoption of machine learning technology.
